Thinking Models in 2026: The Adaptive Reasoning Revolution

Models that think before they answer are reshaping AI engineering. We break down how extended thinking, reasoning budgets, and chain-of-thought inference work across Claude, OpenAI o3, Gemini, and DeepSeek-R1 — and when you should actually use them.

Anurag Verma

We have been building AI-powered features into client products for a while now. For most of 2024 and early 2025, the workflow was straightforward: send a prompt, get a response, ship it. The models were fast, the APIs were simple, and the results were good enough for most use cases.

Then thinking models showed up and changed the equation entirely.

Starting with OpenAI’s o1 in late 2024 and accelerating through 2025 into 2026, every major lab released models that can reason through problems step by step before producing an answer. Anthropic’s extended thinking in Claude, OpenAI’s o3 and o4-mini, Google’s Gemini 2.5 Flash Thinking (and now Gemini 3 Deep Think), DeepSeek-R1 — the list keeps growing. These models do not just pattern-match against training data. They allocate compute to actually think through a problem, and the difference in output quality on hard tasks is staggering.

But here is the thing nobody tells you upfront: thinking models are not always the right choice. They are slower, more expensive, and sometimes overthink simple problems. The real skill in 2026 AI engineering is knowing when to let your model think and when to keep it fast.

This post is our field guide. We will cover how these models work under the hood, compare every major thinking model available today, show you the actual API code to enable reasoning across providers, and share the decision framework we use at CODERCOPS to pick the right model for every task.

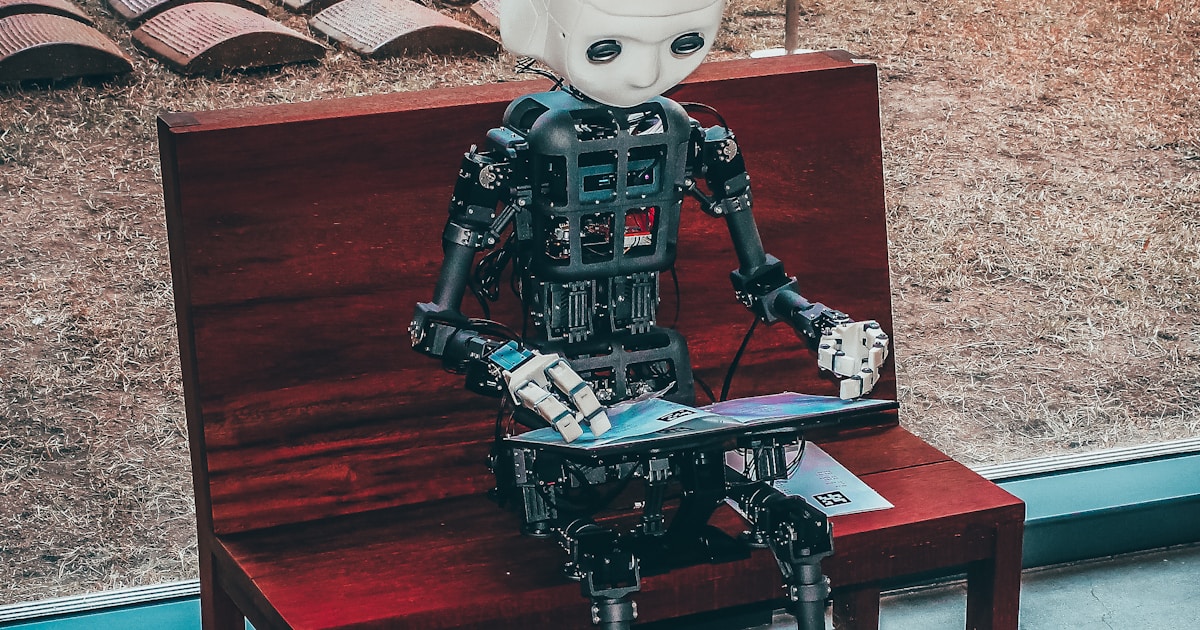

Thinking models allocate extra compute to reason through problems before responding

What Are Thinking Models?

At their core, thinking models are large language models that perform explicit chain-of-thought reasoning before generating their final output. Instead of going straight from prompt to response, they first generate an internal reasoning trace: a sequence of intermediate steps where the model works through the problem.

Think of it like the difference between a student who blurts out an answer immediately versus one who grabs scratch paper, works through the logic, and then writes their final answer. Both might get easy questions right, but the second student crushes the hard ones.

Here is a simplified view of how the process works:

```
┌─────────────────────────────────────────────────────┐
│                   STANDARD MODEL                    │
│                                                     │
│  User Prompt ──────────────────────► Final Answer   │
│                (direct generation)                  │
└─────────────────────────────────────────────────────┘

┌─────────────────────────────────────────────────────┐
│                   THINKING MODEL                    │
│                                                     │
│  User Prompt ──► [Thinking Tokens] ──► Final Answer │
│                  │                │                 │
│                  │ Step 1: Parse the problem        │
│                  │ Step 2: Identify constraints     │
│                  │ Step 3: Consider approaches      │
│                  │ Step 4: Evaluate tradeoffs       │
│                  │ Step 5: Verify solution          │
│                  │                │                 │
│                  └────────────────┘                 │
│                 (extended reasoning)                │
└─────────────────────────────────────────────────────┘
```

The thinking tokens are generated by the model but are typically hidden from the end user (or shown as a summary). You pay for them in compute and latency, but the payoff is significantly better accuracy on tasks that require multi-step reasoning, planning, mathematical proof, code debugging, or nuanced analysis.
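To make that cost concrete, here is a rough sketch of the arithmetic. It assumes thinking tokens are billed at the output-token rate, and the prices are placeholders rather than any provider's actual rates; `estimate_cost` is a hypothetical helper.

```python
def estimate_cost(input_tokens: int, thinking_tokens: int, answer_tokens: int,
                  input_price_per_mtok: float, output_price_per_mtok: float) -> float:
    """Return the estimated request cost in dollars.

    Assumption: thinking tokens are billed like output tokens.
    """
    billed_output = thinking_tokens + answer_tokens
    total = (input_tokens * input_price_per_mtok
             + billed_output * output_price_per_mtok)
    return total / 1_000_000


# Placeholder prices (dollars per million tokens), chosen only for illustration.
IN_PRICE, OUT_PRICE = 3.0, 15.0

without_thinking = estimate_cost(2_000, 0, 500, IN_PRICE, OUT_PRICE)
with_thinking = estimate_cost(2_000, 4_000, 500, IN_PRICE, OUT_PRICE)
print(f"without thinking: ${without_thinking:.4f}")
print(f"with thinking:    ${with_thinking:.4f}")
```

With these placeholder numbers, a 4,000-token thinking trace makes the same request roughly five times more expensive, which is why the budget controls discussed later matter.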

How Is This Different from “Think Step by Step” Prompting?

This trips up a lot of developers. Prompting a standard model with “think step by step” does improve results somewhat, and the reasoning it generates is visible in its output. But there is a fundamental difference: the model was never trained to reason this way. The prompt elicits reasoning-shaped text from the same weights; it does not change what the model was optimized to do.

Thinking models, by contrast, are trained specifically to reason. OpenAI’s o-series models use reinforcement learning to optimize the quality of their chain-of-thought process. DeepSeek-R1-Zero was trained via large-scale RL without supervised fine-tuning, and DeepSeek-R1 added a small cold-start fine-tuning stage before RL. Claude’s extended thinking dedicates a separate budget of tokens purely to internal reasoning before the response begins.

The result is that thinking models catch their own mistakes, reconsider dead-end approaches, and self-correct — things that “think step by step” prompting rarely achieves reliably.

The 2026 Thinking Model Landscape

The market has matured rapidly. Here is where things stand as of February 2026:

| Model | Provider | Reasoning Approach | Key Strength | Budget Control | Open Source |
|---|---|---|---|---|---|
| Claude Opus 4.6 | Anthropic | Extended thinking with adaptive mode | Complex analysis, coding, safety-aware reasoning | budget_tokens (min 1024) + adaptive mode | No |
| Claude Sonnet 4.6 | Anthropic | Extended thinking + interleaved thinking | Fast reasoning with good cost balance | budget_tokens + effort levels | No |
| OpenAI o3 | OpenAI | Native reasoning tokens | SOTA on math, code, science benchmarks | reasoning_effort (low/medium/high) | No |
| OpenAI o4-mini | OpenAI | Optimized reasoning | Fast, cost-efficient reasoning at scale | reasoning_effort (low/medium/high) | No |
| Gemini 3 Deep Think | Google | Deep Think mode | Science, research, engineering problems | thinking_level (low/high) | No |
| Gemini 2.5 Flash | Google | Thinking mode with budget | High-volume, low-latency reasoning tasks | thinking_budget (token count) | No |
| DeepSeek-R1 | DeepSeek | RL-trained CoT (671B MoE) | Math and code reasoning, MIT licensed | N/A (always reasons) | Yes (MIT) |
| QwQ-32B | Alibaba | Distilled reasoning | Strong reasoning in a compact 32B model | N/A | Yes |

What Changed in 2026

The biggest shift is convergence. In 2025, reasoning was a separate product line — you had to choose between “fast model” and “thinking model.” In 2026, the flagship models from every major lab bake reasoning in as a controllable feature. Claude Opus 4.6 introduced adaptive thinking, where the model itself decides how much to reason based on query complexity. OpenAI’s GPT-5 series integrates reasoning natively. Gemini 3 Pro includes Deep Think as a mode you can toggle.

This means the question is no longer “which model should I use?” but rather “how much reasoning should I allocate for this specific task?”

How Adaptive Reasoning Budgets Work

This is the concept that matters most for practical AI engineering in 2026. Most thinking models now give you some form of control over how hard the model thinks; the always-on open models in the table above (DeepSeek-R1 and QwQ-32B) are the exceptions.

Adaptive reasoning budgets let you dial reasoning depth up or down per request

The Core Idea

Not every request needs deep reasoning. Asking “What is the capital of France?” does not need 10,000 thinking tokens. But asking “Find the bug in this 200-line async Python function that intermittently deadlocks under load” absolutely does.

Adaptive reasoning budgets let you — or the model itself — decide how many tokens to spend on thinking before generating the final answer. The tradeoffs are straightforward:

```
More Thinking Tokens
        ▲
        │  ● Higher accuracy on hard tasks
        │  ● Better self-correction
        │  ● More thorough analysis
        │  ● Catches edge cases
        │
        │  BUT
        │
        │  ● Higher latency (seconds → minutes)
        │  ● Higher cost (2-10x more tokens)
        │  ● Diminishing returns on simple tasks
        │  ● Can overthink straightforward queries
        │
        ▼
Fewer Thinking Tokens
```

Provider-Specific Implementations

Each provider handles this differently. Here is how you control reasoning depth across the major APIs.
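As a rough sketch, the per-provider knobs can be hidden behind one helper so the rest of your code speaks a single vocabulary. The function name `reasoning_params`, the three-level scale, the provider keys, and the token values per level are our illustrative assumptions; only the parameter names come from the provider sections that follow.

```python
# Token budgets per level are illustrative assumptions, not provider defaults.
TOKEN_BUDGETS = {"low": 1024, "medium": 4096, "high": 16384}


def reasoning_params(provider: str, level: str) -> dict:
    """Translate a generic level (low/medium/high) into provider-style settings."""
    if level not in TOKEN_BUDGETS:
        raise ValueError(f"unknown level: {level!r}")
    if provider == "anthropic":
        # Passed as the `thinking` argument of messages.create()
        return {"thinking": {"type": "enabled", "budget_tokens": TOKEN_BUDGETS[level]}}
    if provider == "openai":
        # Passed as the `reasoning` argument of responses.create()
        return {"reasoning": {"effort": level}}
    if provider == "gemini-2.5":
        # Fields for ThinkingConfig on token-budget models
        return {"thinking_budget": TOKEN_BUDGETS[level]}
    if provider == "gemini-3":
        # This post lists only LOW and HIGH, so "medium" is rounded up here.
        return {"thinking_level": "LOW" if level == "low" else "HIGH"}
    raise ValueError(f"unknown provider: {provider!r}")


print(reasoning_params("openai", "medium"))
print(reasoning_params("anthropic", "high"))
```

Keeping the mapping in one place means a routing layer can decide "low", "medium", or "high" without knowing which provider will serve the request.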

Code: Enabling Thinking Mode Across Providers

Anthropic Claude — Extended Thinking

Claude offers two approaches: explicit budget control and adaptive thinking.

```python
import anthropic

client = anthropic.Anthropic()

# Approach 1: Explicit thinking budget
# You set the maximum tokens the model can use for reasoning
response = client.messages.create(
    model="claude-sonnet-4-6",
    max_tokens=8000,
    thinking={
        "type": "enabled",
        "budget_tokens": 4000  # Up to 4000 tokens for thinking
    },
    messages=[{
        "role": "user",
        "content": "Find the race condition in this code..."
    }]
)

# Approach 2: Adaptive thinking (recommended for Claude Opus 4.6)
# The model decides how much to think based on complexity
response = client.messages.create(
    model="claude-opus-4-6",
    max_tokens=16000,
    thinking={
        "type": "adaptive"  # Model self-regulates reasoning depth
    },
    messages=[{
        "role": "user",
        "content": "Analyze this system architecture for bottlenecks..."
    }]
)

# Accessing the thinking output
for block in response.content:
    if block.type == "thinking":
        print(f"Thinking: {block.thinking}")  # Internal reasoning
    elif block.type == "text":
        print(f"Answer: {block.text}")  # Final response
```

OpenAI o3 / o4-mini — Reasoning Effort

OpenAI exposes three effort levels: reasoning_effort in the Chat Completions API, or reasoning.effort in the Responses API used below.

```python
from openai import OpenAI

client = OpenAI()

# Using the Responses API (recommended for o3/o4-mini)
response = client.responses.create(
    model="o3",
    input=[{
        "role": "user",
        "content": "Prove that there are infinitely many primes."
    }],
    reasoning={
        "effort": "high",   # low | medium | high
        "summary": "auto"   # Get a summary of reasoning steps
    }
)

# Using o4-mini for cost-efficient reasoning at scale
response = client.responses.create(
    model="o4-mini",
    input=[{
        "role": "user",
        "content": "Optimize this SQL query for a table with 50M rows..."
    }],
    reasoning={
        "effort": "medium"  # Balance speed and depth
    }
)

# Access reasoning summary (empty unless "summary" was requested)
for item in response.output:
    if item.type == "reasoning":
        for part in item.summary:  # summary is a list of text parts
            print(f"Reasoning summary: {part.text}")
    elif item.type == "message":
        print(f"Answer: {item.content[0].text}")
```

Google Gemini — Thinking Budget

Gemini 2.5 models use a token-based thinking budget. Gemini 3 models use a thinking level.

```python
from google import genai
from google.genai import types

client = genai.Client()

# Gemini 2.5 Flash: token-based budget
response = client.models.generate_content(
    model="gemini-2.5-flash",
    contents="Explain the P vs NP problem and its implications.",
    config=types.GenerateContentConfig(
        thinking_config=types.ThinkingConfig(
            thinking_budget=4096  # Max tokens for thinking
            # Set to 0 to disable, -1 for dynamic
        )
    )
)

# Gemini 3 Pro: level-based control
response = client.models.generate_content(
    model="gemini-3-pro",
    contents="Design a distributed consensus algorithm for this use case.",
    config=types.GenerateContentConfig(
        thinking_config=types.ThinkingConfig(
            thinking_level="HIGH",  # LOW or HIGH
            include_thoughts=True   # Needed to get thought summaries back
        )
    )
)

# Access thinking content (thought parts appear only with include_thoughts=True)
for part in response.candidates[0].content.parts:
    if not part.text:
        continue  # Skip non-text parts
    if part.thought:
        print(f"Thinking: {part.text}")
    else:
        print(f"Answer: {part.text}")
```

DeepSeek-R1 — Always-On Reasoning

DeepSeek-R1 is different: it always reasons. The open-weight model emits its chain-of-thought inline, wrapped in <think> tags, while DeepSeek’s hosted API returns it in a separate reasoning_content field.
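When you run the open weights yourself, that raw text needs splitting into reasoning and answer. A minimal sketch, assuming the reasoning arrives as a single `<think>...</think>` block; `split_reasoning` is a hypothetical helper, not part of any SDK.

```python
import re

THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw: str) -> tuple[str, str]:
    """Return (reasoning, answer) from text containing one <think> block."""
    match = THINK_BLOCK.search(raw)
    if match is None:
        return "", raw.strip()  # No reasoning block found
    reasoning = match.group(1).strip()
    # Everything outside the matched block is treated as the answer
    answer = (raw[:match.start()] + raw[match.end():]).strip()
    return reasoning, answer


raw_output = "<think>2 + 2 is 4, then double it.</think>The result is 8."
reasoning, answer = split_reasoning(raw_output)
print(f"Thinking: {reasoning}")
print(f"Answer: {answer}")
```

DeepSeek's hosted API performs this split for you, as the call that follows shows.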

```python
from openai import OpenAI

# DeepSeek uses an OpenAI-compatible API
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="your-deepseek-key"
)

response = client.chat.completions.create(
    model="deepseek-reasoner",
    messages=[{
        "role": "user",
        "content": "Solve this competitive programming problem..."
    }]
)

# The hosted API returns the chain-of-thought in reasoning_content,
# separate from the final answer in content
print(response.choices[0].message.reasoning_content)  # Thinking
print(response.choices[0].message.content)            # Answer
```

When to Use Thinking Models (and When Not To)

This is the section we wish someone had written for us six months ago. After deploying thinking models across dozens of client projects, we have developed a clear decision framework.

Use Thinking Models For

Complex code tasks. Bug hunting, architecture review, refactoring suggestions, and writing algorithms with tricky edge cases. We saw a 40% reduction in back-and-forth iterations when we switched code review agents from standard to thinking models.

Mathematical and logical reasoning. Anything involving proofs, multi-step calculations, statistical analysis, or constraint satisfaction. Standard models frequently make arithmetic errors or skip logical steps. Thinking models catch and correct these.

Multi-step planning. When the model needs to decompose a goal into ordered subtasks, evaluate dependencies, and produce a coherent plan. This is critical for agentic workflows where the AI is executing a sequence of tool calls.

Ambiguous or underspecified problems. When the prompt could be interpreted multiple ways, thinking models are better at identifying the ambiguity, considering alternatives, and either asking for clarification or choosing the most reasonable interpretation.

High-stakes outputs. Legal analysis, medical information, financial calculations, security audits — anywhere a wrong answer has real consequences. The self-verification that thinking models perform significantly reduces hallucination rates.

Do NOT Use Thinking Models For

Simple retrieval and formatting. “What is the capital of France?” or “Convert this JSON to YAML.” Standard models handle these instantly and perfectly. Thinking models add latency for zero benefit.

High-volume, low-complexity tasks. Chat auto-responses, content tagging, basic classification, data extraction from structured documents. Use a fast model (Claude Haiku, GPT-4o-mini, Gemini Flash) and save your budget.

Real-time user-facing interactions. If users expect sub-second responses — autocomplete, inline suggestions, chat typing indicators — thinking models will feel sluggish. Users will notice and complain.

Creative writing and brainstorming. This one surprises people. Thinking models can actually overthink creative tasks, producing overly structured and analytical output. Standard models often produce more natural, creative results for open-ended writing.

The Hybrid Architecture Pattern

The approach we recommend to most clients is a router pattern: use a lightweight classifier (or the model’s own adaptive reasoning) to decide the reasoning depth per request.

```
┌──────────────┐
│  User Query  │
└──────┬───────┘
       │
       ▼
┌──────────────┐     Simple     ┌──────────────────┐
│  Complexity  │───────────────►│  Standard Model  │
│  Classifier  │                │  (fast, cheap)   │
└──────┬───────┘                └──────────────────┘
       │
       │ Complex
       ▼
┌──────────────────┐
│  Thinking Model  │
│ (slower, better) │
└──────────────────┘
```

In practice, we often implement this using adaptive thinking modes where available (Claude’s "type": "adaptive") or by running a cheap model first to score complexity, then routing to a thinking model only when the score exceeds a threshold.
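The scoring step does not have to start life as a model call. Below is a deliberately crude sketch that scores a query from 1 to 5 using keyword and length heuristics. The signal words, the weights, and the names `classify_complexity` and `pick_route` are illustrative assumptions; in practice we would expect a small model or a trained classifier in its place.

```python
# Illustrative signal words; tune these against your own traffic.
REASONING_SIGNALS = ("debug", "deadlock", "prove", "optimize", "architecture",
                     "race condition", "refactor", "trade-off")


def classify_complexity(query: str) -> int:
    """Score a query from 1 (trivial) to 5 (hard) with simple heuristics."""
    text = query.lower()
    score = 1
    score += sum(1 for signal in REASONING_SIGNALS if signal in text)
    if len(text.split()) > 100:  # Long prompts usually carry more context
        score += 1
    return min(score, 5)


def pick_route(query: str) -> str:
    """Map the score onto three tiers: standard, medium, and high reasoning."""
    score = classify_complexity(query)
    if score <= 2:
        return "standard"
    if score <= 4:
        return "thinking-medium"
    return "thinking-high"


print(pick_route("What is the capital of France?"))
print(pick_route("Prove the invariant holds, then optimize the hot loop."))
```

A heuristic like this is cheap enough to run on every request, and logging its scores gives you labeled data for a better classifier later.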

```typescript
// Simplified router pattern
// classifyComplexity, callStandardModel, and callThinkingModel are
// application-specific helpers (not shown here)
async function routeQuery(query: string): Promise<string> {
  // Step 1: Quick complexity check with a fast model
  const complexity = await classifyComplexity(query); // returns 1-5

  // Step 2: Route based on complexity
  if (complexity <= 2) {
    return callStandardModel(query); // Fast, cheap
  } else if (complexity <= 4) {
    return callThinkingModel(query, { budget: "medium" });
  } else {
    return callThinkingModel(query, { budget: "high" });
  }
}
```

Benchmarks: Does Thinking Actually Help?

The numbers speak for themselves. Here are benchmark comparisons that show where thinking models earn their keep — and where they do not.

Where Thinking Models Dominate

| Benchmark | Standard Model | Thinking Model | Relative Improvement |
|---|---|---|---|
| AIME 2024 (math competition) | ~30% (GPT-4o) | ~79.8% (DeepSeek-R1) | +166% |
| ARC-AGI (novel reasoning) | ~5% (GPT-4o) | ~87.5% (o3 high) | +1650% |
| SWE-bench Verified (real bugs) | ~33% (GPT-4o) | ~69% (o3) | +109% |
| GPQA Diamond (PhD-level science) | ~53% (GPT-4o) | ~79% (o3) | +49% |
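Note that the last column reports relative change, not percentage points. The arithmetic behind it, as a quick sketch (`relative_improvement` is just an illustrative helper name, and the scores are the approximate figures from the table):

```python
def relative_improvement(baseline: float, improved: float) -> int:
    """Relative change from baseline to improved, as a rounded percentage."""
    return round((improved - baseline) / baseline * 100)


# (standard model score, thinking model score), taken from the table above
rows = {
    "AIME 2024": (30.0, 79.8),
    "ARC-AGI": (5.0, 87.5),
    "SWE-bench Verified": (33.0, 69.0),
    "GPQA Diamond": (53.0, 79.0),
}

for name, (standard, thinking) in rows.items():
    relative = relative_improvement(standard, thinking)
    absolute = thinking - standard
    print(f"{name}: +{relative}% relative, +{absolute:.1f} points absolute")
```

The distinction matters most for ARC-AGI: a very low baseline inflates the relative figure, so the absolute gap is the fairer number to quote.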

Where Standard Models Are Fine

| Task | Standard Model | Thinking Model | Verdict |
|---|---|---|---|
| Text summarization | ~92% quality | ~93% quality | Not worth the cost |
| Simple Q&A | ~95% accuracy | ~96% accuracy | Marginal gain |
| Translation | ~90% BLEU | ~91% BLEU | Not worth the latency |
| Content generation | Subjectively preferred | Often over-structured | Standard wins |

The pattern is clear: thinking models provide massive gains on tasks that require genuine reasoning, but offer diminishing returns on tasks that are primarily about language fluency and pattern matching.

Building Production Systems with Thinking Models

Here are practical lessons we have learned deploying thinking models in production.

1. Set Reasonable Token Budgets

Do not just set budget_tokens to the maximum and forget about it. We profile our queries and set budgets based on actual task complexity. For Claude, we typically use:

- Simple analysis: 1,024-2,048 thinking tokens

- Code review: 4,096-8,192 thinking tokens

- Complex architecture planning: 8,192-16,384 thinking tokens

- Deep research synthesis: 16,384+ thinking tokens
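One way to keep those ranges out of individual call sites is a small lookup. This is a sketch: the task names, the choice of the upper end of each range, and the 4,000-token answer allowance are our assumptions. The 1,024 floor matches the minimum noted in the model table, and `max_tokens` is kept above the budget so the visible answer still has room.

```python
# Upper end of each range listed above; "deep_research" starts at its 16,384 floor.
THINKING_BUDGETS = {
    "simple_analysis": 2_048,
    "code_review": 8_192,
    "architecture_planning": 16_384,
    "deep_research": 16_384,
}
MIN_BUDGET = 1_024        # Minimum budget_tokens noted in the model table
ANSWER_ALLOWANCE = 4_000  # Assumed headroom for the visible answer


def thinking_kwargs(task_type: str) -> dict:
    """Build the thinking-related kwargs for a messages.create() call."""
    # Unknown task types fall back to the minimum budget
    budget = max(THINKING_BUDGETS.get(task_type, MIN_BUDGET), MIN_BUDGET)
    return {
        "max_tokens": budget + ANSWER_ALLOWANCE,
        "thinking": {"type": "enabled", "budget_tokens": budget},
    }


print(thinking_kwargs("code_review"))
```

The result can be unpacked straight into a request, for example `client.messages.create(model=..., messages=..., **thinking_kwargs("code_review"))`.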

2. Do Not Over-Prompt Thinking Models

This catches a lot of developers off guard. Traditional prompt engineering techniques — “think step by step,” “consider all angles,” “break this into sub-problems” — can actually hurt performance with thinking models. They already know how to reason. Over-prompting makes them over-think or creates conflicting instructions with their internal reasoning process.

Keep prompts for thinking models simple and direct. State the problem clearly, provide the necessary context, and let the model’s reasoning do the work.

3. Stream Thinking Tokens for UX

If you are using thinking models in user-facing applications, stream the thinking process to show the user that work is happening. A blank screen for 15 seconds feels broken. A visible chain-of-thought that unfolds in real time feels impressive.

```python
import anthropic

client = anthropic.Anthropic()
query = "Find the race condition in this code..."  # Example prompt

# Stream extended thinking with Claude
with client.messages.stream(
    model="claude-sonnet-4-6",
    max_tokens=8000,
    thinking={"type": "enabled", "budget_tokens": 4000},
    messages=[{"role": "user", "content": query}],
) as stream:
    for event in stream:
        if event.type == "content_block_start":
            if event.content_block.type == "thinking":
                print("Reasoning: ", end="", flush=True)
            elif event.content_block.type == "text":
                print("\nAnswer: ", end="", flush=True)
        elif event.type == "content_block_delta":
            # Check the delta type explicitly instead of probing attributes
            if event.delta.type == "thinking_delta":
                print(event.delta.thinking, end="", flush=True)
            elif event.delta.type == "text_delta":
                print(event.delta.text, end="", flush=True)
```

4. Cache Thinking Results

Thinking tokens are expensive. If you have queries that recur with similar patterns, cache the results. This is especially important for code analysis tools where multiple users might analyze the same codebase or where the same architecture questions come up repeatedly.
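A minimal sketch of the idea, using an in-memory dictionary keyed by a hash of everything that can change the answer. The helper names and the `call_model` callback signature are assumptions for illustration, and a production system would use a shared store with expiry rather than a module-level dict.

```python
import hashlib
import json
from typing import Callable

_cache: dict[str, str] = {}


def cache_key(model: str, prompt: str, budget_tokens: int) -> str:
    """Hash the model, prompt, and budget into one stable key."""
    payload = json.dumps(
        {"model": model, "prompt": prompt.strip(), "budget": budget_tokens},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def cached_call(model: str, prompt: str, budget_tokens: int,
                call_model: Callable[[str, str, int], str]) -> str:
    """Return a cached answer if present, otherwise call the model once."""
    key = cache_key(model, prompt, budget_tokens)
    if key not in _cache:
        _cache[key] = call_model(model, prompt, budget_tokens)
    return _cache[key]


# Stand-in for a real API call so the sketch runs without credentials.
calls: list[str] = []


def fake_model(model: str, prompt: str, budget_tokens: int) -> str:
    calls.append(prompt)
    return f"analysis of: {prompt}"


first = cached_call("some-model", "Review auth.py", 4096, fake_model)
second = cached_call("some-model", "Review auth.py", 4096, fake_model)
print(f"model calls made: {len(calls)}")
```

This only catches exact repeats (after trimming whitespace). Matching merely similar queries would need prompt normalization or an embedding-based lookup, which is a larger design decision.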

5. Monitor and Optimize

Track three metrics for every thinking model deployment:

- Thinking token ratio: How many thinking tokens vs. output tokens? If the ratio is consistently above 10:1, you might be using too much reasoning budget.

- Quality delta: A/B test thinking vs. non-thinking for your specific use case. If the quality improvement is under 5%, switch to a standard model.

- User-perceived latency: Measure time-to-first-token and total response time. Set SLOs and alert when thinking models push you past acceptable thresholds.
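The first two checks reduce to simple arithmetic once you log the numbers. A sketch, assuming you already record token counts and an evaluation score per variant; the `RequestStats` fields are hypothetical, and the 5% threshold is read here as a relative improvement. Latency is left out because it depends on your own timing instrumentation.

```python
from dataclasses import dataclass


@dataclass
class RequestStats:
    thinking_tokens: int
    answer_tokens: int
    quality_thinking: float  # Eval score with thinking enabled
    quality_standard: float  # Same eval on the standard model


def thinking_ratio(stats: RequestStats) -> float:
    """Thinking tokens spent per answer token."""
    return stats.thinking_tokens / max(stats.answer_tokens, 1)


def quality_delta(stats: RequestStats) -> float:
    """Relative quality gain of thinking over the standard model."""
    return (stats.quality_thinking - stats.quality_standard) / stats.quality_standard


def recommendations(stats: RequestStats) -> list[str]:
    """Apply the 10:1 ratio and 5% quality thresholds from the list above."""
    advice = []
    if thinking_ratio(stats) > 10:
        advice.append("reduce reasoning budget")
    if quality_delta(stats) < 0.05:
        advice.append("switch to a standard model")
    return advice


stats = RequestStats(thinking_tokens=12_000, answer_tokens=800,
                     quality_thinking=0.93, quality_standard=0.92)
print(recommendations(stats))
```

In a real deployment these would be aggregated over many requests rather than judged on a single one.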

What Is Coming Next

The trajectory is clear. By the end of 2026, we expect:

Reasoning will be fully invisible. The distinction between “thinking” and “non-thinking” models will disappear. Every model will reason adaptively, spending more compute on hard problems and less on easy ones, without developers needing to configure anything.

Smaller models will reason better. DeepSeek’s distilled R1 models (as small as 1.5B parameters) already show that reasoning capability can be compressed. Expect reasoning-capable models that run on edge devices and mobile phones.

Reasoning traces will become a product feature. Users will want to see how the AI arrived at its answer, especially in high-stakes domains like healthcare, legal, and finance. Explainable reasoning is a competitive advantage.

Cost will drop dramatically. As inference optimization improves and competition intensifies, the premium for thinking models will shrink. Techniques like Adaptive Reasoning Suppression (ARS) — which reduces redundant reasoning steps by up to 53% — will become standard.

The Bottom Line

Thinking models are not a gimmick. They represent a genuine architectural shift in how AI systems solve problems. The models that think before they answer produce measurably better results on anything that requires real reasoning — math, code, planning, analysis, and complex decision-making.

But they are a tool, not a silver bullet. The developers and teams that will get the most value from thinking models in 2026 are the ones who understand the tradeoffs, implement adaptive routing, set appropriate reasoning budgets, and measure the actual impact on their specific use cases.

At CODERCOPS, we have been integrating thinking models into client projects since the early days of o1, and the pattern is consistent: when you match the right reasoning depth to the right task, you get dramatically better results without blowing your budget.

If you are building AI-powered products and want to figure out where thinking models fit into your architecture — or if you have already deployed them and want to optimize cost and latency — get in touch with us. This is exactly the kind of engineering challenge we love solving.
