Service · S.02

AI Development Services

Production AI features. Real evals. Real guardrails. Real ship.

How we work

The process we follow.


Step · 01

Eval first

Before we write a prompt, we write the evals. If we can't measure it, we can't ship it.
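A minimal sketch of what "evals first" can look like in code. Everything here is illustrative: `classify_ticket` is a hypothetical stand-in for a real model call, the three cases are made up, and the 0.9 threshold is an assumed bar rather than a fixed rule.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected: str


def run_evals(model: Callable[[str], str], cases: Sequence[EvalCase]) -> float:
    """Return the fraction of cases where the normalized output matches exactly."""
    if not cases:
        raise ValueError("eval set is empty")
    passed = sum(
        1 for case in cases
        if model(case.prompt).strip().lower() == case.expected
    )
    return passed / len(cases)


# Hypothetical stand-in for a model call; swap in a real provider client.
def classify_ticket(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "other"


CASES = [
    EvalCase("My invoice is wrong", "billing"),
    EvalCase("Invoice never arrived", "billing"),
    EvalCase("App crashes on login", "other"),
]

SHIP_THRESHOLD = 0.9  # assumed bar; set it per feature

score = run_evals(classify_ticket, CASES)
ready_to_ship = score >= SHIP_THRESHOLD
```

The point of the shape is that the eval set exists before any prompt does, so every later prompt or model change is scored against the same cases.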


Step · 02

Cheapest model that works

We start with Haiku or GPT-4o-mini and upgrade only when an eval fails. Most production features don't need Opus.
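One way to make "cheapest model that works" mechanical is a ladder ordered by price, walked until an eval passes. This is a sketch under stated assumptions: the tier names are placeholders, and the scores are invented to show the control flow.

```python
from typing import Callable, Optional, Sequence

# Hypothetical tiers ordered cheapest first; the names are placeholders.
MODEL_LADDER = ("small", "medium", "large")


def cheapest_passing_model(
    score_model: Callable[[str], float],
    threshold: float,
    ladder: Sequence[str] = MODEL_LADDER,
) -> Optional[str]:
    """Walk the ladder from cheapest to priciest and stop at the first pass."""
    for name in ladder:
        if score_model(name) >= threshold:
            return name
    return None  # nothing passed: revisit the prompt or the eval set


# Made-up eval scores, for illustration only.
FAKE_SCORES = {"small": 0.82, "medium": 0.93, "large": 0.97}

choice = cheapest_passing_model(FAKE_SCORES.__getitem__, threshold=0.9)
```

With these invented scores, `choice` is `"medium"`: the small tier misses the bar, and the large tier is never evaluated.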


Step · 03

Streaming + fallbacks

Every feature gets a streaming UI and a fallback plan for provider outages.
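The fallback half of this step can be sketched as an ordered list of providers tried in turn. Both providers below are hypothetical functions (the first simulates an outage), and streaming is left out to keep the example short.

```python
from typing import Callable, List, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (name, text) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # real code should catch provider-specific errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical providers: the primary simulates an outage.
def primary(prompt: str) -> str:
    raise TimeoutError("provider unavailable")


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, text = complete_with_fallback(
    "hello", [("primary", primary), ("secondary", secondary)]
)
```

Returning the provider name alongside the text makes it easy to log how often the fallback path is actually taken.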


Step · 04

Ship + monitor

Deploy with cost guardrails, drift alerts, and a feedback loop into the eval set.
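A drift alert can be as simple as a rolling pass rate compared against the baseline measured at launch. The sketch below assumes each production sample is already graded pass or fail; the baseline, tolerance, and window size are illustrative values.

```python
from collections import deque


class DriftMonitor:
    """Flag drift when the rolling pass rate falls below baseline minus tolerance."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 50):
        self.baseline = baseline
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # keeps only the most recent outcomes

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def pass_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def drifting(self) -> bool:
        # Wait for a full window so a few early failures don't trigger an alert.
        full = len(self.results) == self.results.maxlen
        return full and self.pass_rate() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.95, tolerance=0.05, window=10)
for _ in range(10):
    monitor.record(True)
healthy = monitor.drifting()

for _ in range(3):
    monitor.record(False)
degraded = monitor.drifting()
```

Here `healthy` is `False` and `degraded` is `True`: after three failures the ten-sample window holds seven passes, a 0.7 pass rate against a 0.9 floor. Failing samples are natural candidates to add back into the eval set.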

Pricing

Fair, fixed, written down.

Starts at

$3,500

Typical timeline

2–5 weeks

Package · 01

AI feature spike

$3,500

1–2 weeks

- 1 feature scoped + shipped

- Eval harness

- Cost + latency baseline

Package · 02

AI product build

$8,000

3–5 weeks

- Full feature suite

- Provider abstraction

- Drift monitoring

- Production guardrails

Package · 03

AI partnership

$12,000+

Ongoing

- Continuous shipping

- Eval pipeline

- Prompt + model upgrades

- On-call AI engineer

Press clippings

What clients actually said.

“Finding someone who can actually ship LLM features in production is rare. The studio shipped, then helped me hire a verified builder for the rollout.”

Alex Chen

CEO · Lore Protocol

“Working with CODERCOPS was seamless. They understood the nuances of AI-driven interviews and built a product that feels incredibly human. Our users love the realistic experience.”

Sarah Johnson

Founder · PrepAI

“QueryLytic has democratized data access across our organization. Marketing, sales, and ops teams can now get insights without waiting for engineering. CODERCOPS delivered beyond our expectations.”

Michael Torres

CTO · DataFlow Analytics

The toolkit

The stack we trust.

Models

- Anthropic Claude

- OpenAI GPT-4/5

- Gemini

- Open-source (Llama, Mistral)

Vector / Retrieval

- pgvector

- Pinecone

- Qdrant

- Weaviate

Frameworks

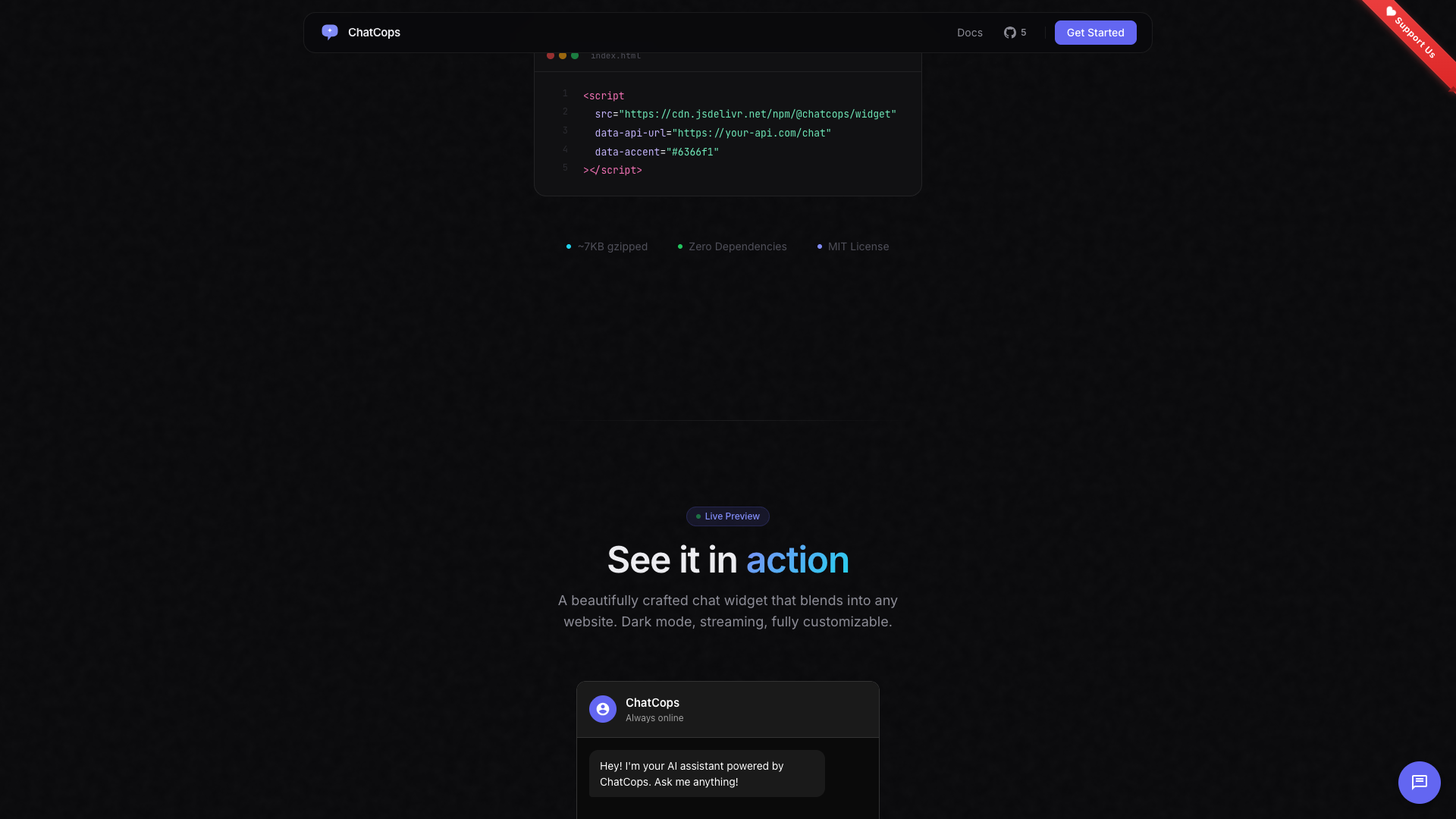

- Vercel AI SDK

- LangChain

- LlamaIndex

- Custom

Eval / Obs

- Braintrust

- LangSmith

- Helicone

- Custom

Boring choices on purpose. Plain-stack code outlives the consultant. If you have a stack already, we'll meet you there.

What “production AI” actually means

A demo can hallucinate and nobody cares. A production feature can hallucinate once, on the wrong customer, and the support tickets pile up forever.

The difference between a hackathon prompt and a production AI feature is the unglamorous stuff: evaluation harnesses, structured output validation, provider fallbacks, cost guardrails, drift monitors, and prompt versioning. None of it is hard. All of it is necessary.
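As one example of that unglamorous work, here is a minimal sketch of structured output validation using only the standard library. The `Triage` shape and the category set are hypothetical; a real feature would define its own schema.

```python
import json
from dataclasses import dataclass

ALLOWED_CATEGORIES = {"billing", "bug", "other"}  # hypothetical label set


@dataclass(frozen=True)
class Triage:
    category: str
    confidence: float


def parse_triage(raw: str) -> Triage:
    """Parse model output as JSON and reject anything outside the expected schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    category = data.get("category")
    confidence = data.get("confidence")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Triage(category, float(confidence))


good = parse_triage('{"category": "billing", "confidence": 0.92}')
```

Anything that fails validation raises `ValueError`, which gives the caller one place to retry, fall back, or hand off to a human instead of passing malformed output downstream.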

What we build

- Copilots and assistants. Embedded in your product UI. Aware of context. Know when to call a tool, when to defer to a human, when to refuse.

- Document Q&A and search. RAG over your own corpus — manuals, support tickets, internal wikis, code, contracts. Cited answers, not vibes.

- Classifiers and extractors. Replace manual triage, tagging, and data entry with prompted models that match human accuracy at 1/100th the cost.

- Agentic workflows. Multi-step automations where the model picks tools, recovers from errors, and reports back. Bounded, observable, and debuggable.

- Content engines. Generate descriptions, summaries, translations, drafts — at quality your editors can actually use without a rewrite.

How we work

Our default stack is the Vercel AI SDK for streaming, pgvector for retrieval, and Braintrust for evals — but we’ll use whatever fits your existing infrastructure. We don’t push a stack; we push a process.

Every feature we ship comes with an eval set you can run yourself, a cost dashboard, and a prompt versioning system. When OpenAI raises prices or Anthropic releases Sonnet 5, you’ll know within a day whether to switch.
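Prompt versioning does not need heavy tooling. A minimal sketch, assuming an in-memory registry and version IDs derived from a hash of the template text (the class and method names are hypothetical):

```python
import hashlib
from typing import Dict, Optional


class PromptRegistry:
    """Store prompt templates under content-derived version IDs."""

    def __init__(self) -> None:
        self._versions: Dict[str, Dict[str, str]] = {}
        self._latest: Dict[str, str] = {}

    def register(self, name: str, template: str) -> str:
        # Same text always yields the same ID, so re-registering is harmless.
        version = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        self._versions.setdefault(name, {})[version] = template
        self._latest[name] = version
        return version

    def get(self, name: str, version: Optional[str] = None) -> str:
        """Return a pinned version, or the most recently registered one."""
        return self._versions[name][version or self._latest[name]]


registry = PromptRegistry()
v1 = registry.register("summarize", "Summarize: {text}")
v2 = registry.register("summarize", "Summarize in two sentences: {text}")

current = registry.get("summarize")
pinned = registry.get("summarize", v1)
```

Logging the version ID next to each eval score is what makes a model or prompt switch a comparison rather than a guess.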

Common questions

Things people ask first.

It depends on the task. We start cheap (Haiku, GPT-4o-mini) and only upgrade if evals demand it. Most production features don't need a frontier model.

No. We use enterprise APIs with zero-retention contracts. Your data is yours.

Per-feature cost budgets in code. Hard cutoffs. Provider abstraction so we can swap models when pricing changes. Monthly cost reports.
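A per-feature budget with a hard cutoff can be sketched in a few lines. The prices and limits below are made-up placeholders, not real provider rates, and a production version would persist spend and reset it per billing period.

```python
from typing import Dict


class BudgetExceeded(RuntimeError):
    """Raised when a charge would push a feature past its hard limit."""


class FeatureBudget:
    """Track spend per feature and refuse charges that would cross the limit."""

    def __init__(self, limits_usd: Dict[str, float]):
        self.limits = dict(limits_usd)
        self.spent = {feature: 0.0 for feature in limits_usd}

    def charge(
        self,
        feature: str,
        input_tokens: int,
        output_tokens: int,
        usd_per_1k_in: float,
        usd_per_1k_out: float,
    ) -> float:
        cost = (
            input_tokens / 1000 * usd_per_1k_in
            + output_tokens / 1000 * usd_per_1k_out
        )
        if self.spent[feature] + cost > self.limits[feature]:
            raise BudgetExceeded(
                f"{feature}: limit ${self.limits[feature]:.2f} reached"
            )
        self.spent[feature] += cost
        return cost


budget = FeatureBudget({"summaries": 1.00})
first_cost = budget.charge("summaries", 2000, 1000, 0.10, 0.40)  # placeholder rates

try:
    budget.charge("summaries", 2000, 1000, 0.10, 0.40)
    cut_off = False
except BudgetExceeded:
    cut_off = True
```

The first call costs about $0.60 and succeeds; the second would bring the total to about $1.20, so it is refused and `cut_off` is `True`. In practice the check would run on an estimated cost before the model call is made.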

RAG, grounding, structured output, and an eval harness that runs on every prompt change. We don't ship features that hallucinate critical data.

Rarely. In 2026, prompting + retrieval covers 95% of use cases. Fine-tuning is for narrow classification or style-mimicking, not knowledge.